Download the yolov5n model to ~/yolo_projects/yolov5

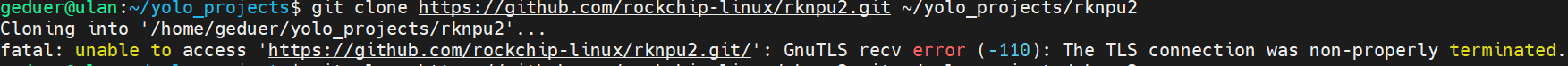

    git clone https://github.com/ultralytics/yolov5.git ~/yolo_projects/yolov5

The git clone command sometimes times out, as shown in the figure. Retrying once or twice usually succeeds.
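The manual retry described above can be automated. The following is a minimal sketch, not part of the original tutorial: the helper name `retry`, the `RETRY_DELAY` variable, and the attempt count of 3 are all illustrative assumptions.

```shell
# retry MAX_ATTEMPTS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or MAX_ATTEMPTS is reached.
# RETRY_DELAY (seconds between attempts) is a hypothetical knob; default 5.
retry() {
    local max_attempts="$1"
    shift
    local attempt=1
    until "$@"; do
        if [ "$attempt" -ge "$max_attempts" ]; then
            echo "Giving up after $attempt attempts: $*" >&2
            return 1
        fi
        echo "Attempt $attempt failed; retrying in ${RETRY_DELAY:-5}s..." >&2
        sleep "${RETRY_DELAY:-5}"
        attempt=$((attempt + 1))
    done
}

# Example usage (commented out because it needs network access):
# retry 3 git clone https://github.com/ultralytics/yolov5.git ~/yolo_projects/yolov5
```

The function returns a non-zero status if every attempt fails, so a calling script can stop instead of continuing with a missing repository.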

Install with pip install and run inference on the 幽兰

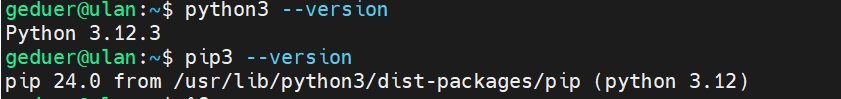

Install Python 3 and pip3.

    sudo apt-get install python3 python3-dev python3-pip

Check that the installation succeeded with the following commands:

    # Check the Python version
    python3 --version
    # Check the pip version
    pip3 --version
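The version checks above can be folded into a single pass that also covers the other tools this guide uses. This is a sketch under assumptions: the helper name `check_tools` is hypothetical, and the tool list in the usage line is taken from the commands that appear in this guide.

```shell
# check_tools TOOL...
# Reports whether each named command is available on PATH.
# Returns 0 only if all of them are found.
check_tools() {
    local missing=0
    local tool
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "found: $tool"
        else
            echo "missing: $tool" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example usage:
# check_tools python3 pip3 git wget
```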

- Clone the rknn-toolkit2 repository into the ~/yolo_projects directory

    git clone https://github.com/rockchip-linux/rknn-toolkit2.git ~/yolo_projects/rknn-toolkit2

- Enter the rknn-toolkit2 project directory

    cd ~/yolo_projects/rknn-toolkit2

Install the required versions of the dependency packages.

Download the project's dependency file, requirements.txt

    wget "https://gitee.com/mofeitekk/yolov5n.rknn/raw/master/requirements.txt?raw=true" -O ~/yolo_projects/rknn-toolkit2/doc/requirements.txt

Install the Python dependencies

    pip3 install -r doc/requirements.txt --break-system-packages

Install the runtime library that RKNN-Toolkit2 depends on

    cd ~/yolo_projects
    git clone https://github.com/rockchip-linux/rknpu2.git
    cd rknpu2/runtime/RK3588/Linux/librknn_api/aarch64
    sudo cp ./librknnrt.so /usr/lib/

Deploy the model on the 幽兰

Create a test directory and download deploy.py

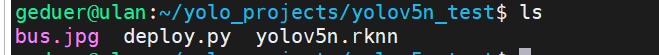

    mkdir ~/yolo_projects/yolov5n_test
    cd ~/yolo_projects/yolov5n_test
    wget "https://gitee.com/mofeitekk/yolov5n.rknn/raw/master/deploy.py?raw=true" -O deploy.py

Download yolov5n.rknn

    wget "https://gitee.com/mofeitekk/yolov5n.rknn/raw/master/yolov5n.rknn?raw=true" -O yolov5n.rknn

- Copy rknn-toolkit2/examples/onnx/yolov5/bus.jpg into the current directory (~/yolo_projects/yolov5n_test).

    cp ~/yolo_projects/rknn-toolkit2/rknn-toolkit2/examples/onnx/yolov5/bus.jpg ./

The current directory (~/yolo_projects/yolov5n_test) now looks like this:

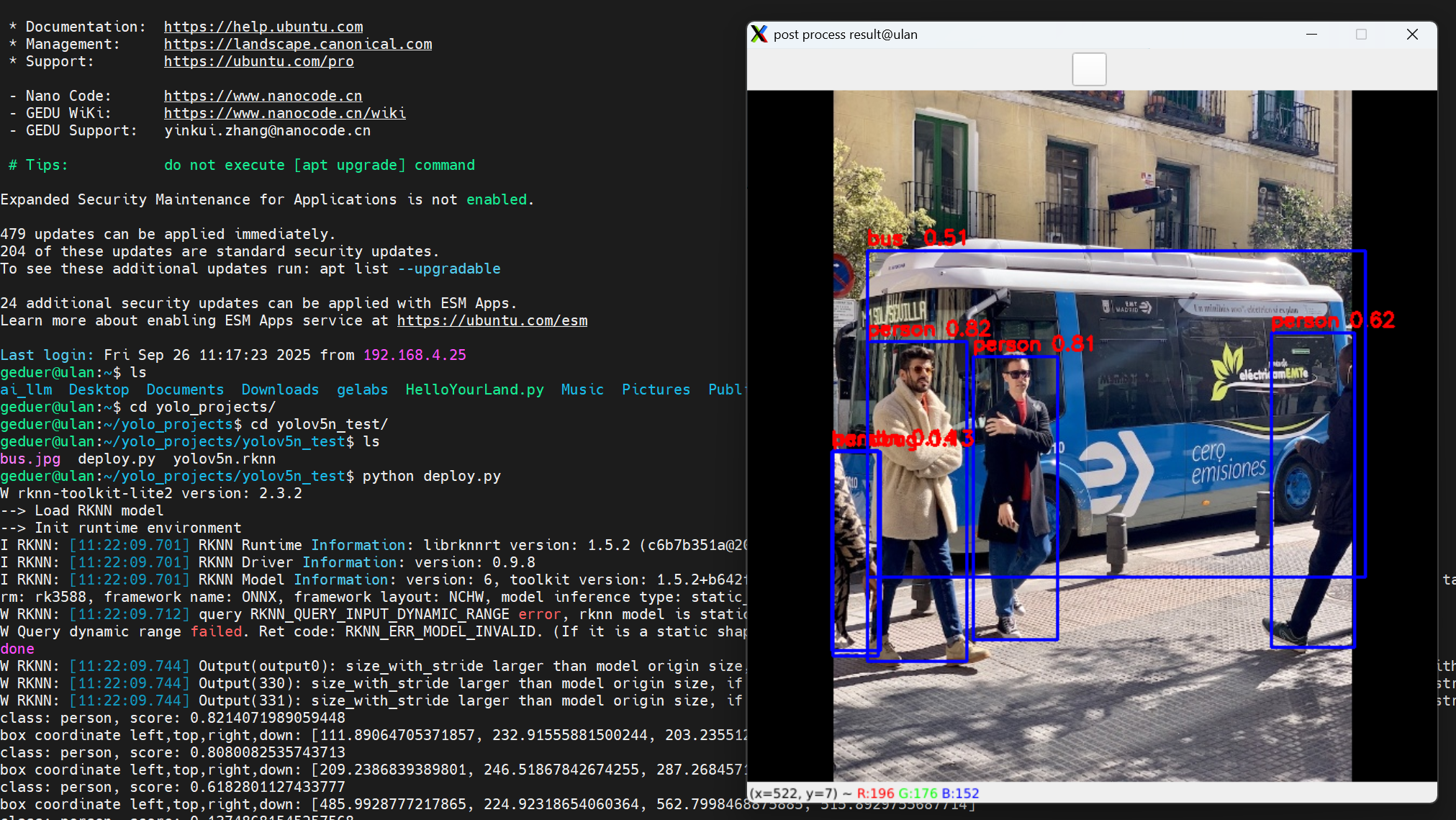
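Before running the script, it may be worth confirming that the three files from the steps above (deploy.py, yolov5n.rknn, bus.jpg) are actually present. A minimal sketch follows; the helper name `check_files` is an assumption introduced for illustration.

```shell
# check_files DIR NAME...
# Verifies that each NAME exists as a regular file inside DIR.
# Returns 0 only if every file is present.
check_files() {
    local dir="$1"
    shift
    local status=0
    local name
    for name in "$@"; do
        if [ -f "$dir/$name" ]; then
            echo "ok: $name"
        else
            echo "missing: $name" >&2
            status=1
        fi
    done
    return "$status"
}

# Example usage:
# check_files ~/yolo_projects/yolov5n_test deploy.py yolov5n.rknn bus.jpg
```

A "missing" line usually points to a wget or cp step that failed silently, so rerunning that step is a reasonable first response.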

Then run the following command:

    python deploy.py

The image is recognized successfully:

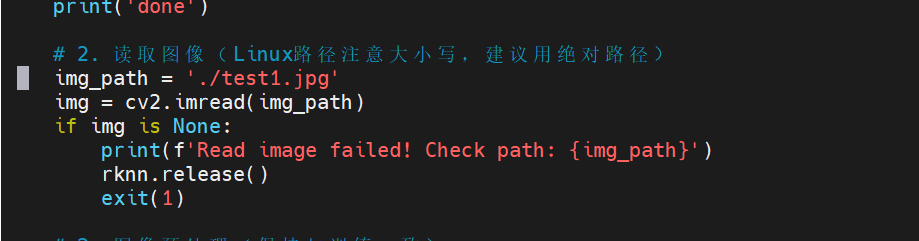

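- Switch the image to recognize

Line 184 of the script sets the path of the image to recognize. Upload the image you want to recognize, then change that path.

    vim deploy.py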

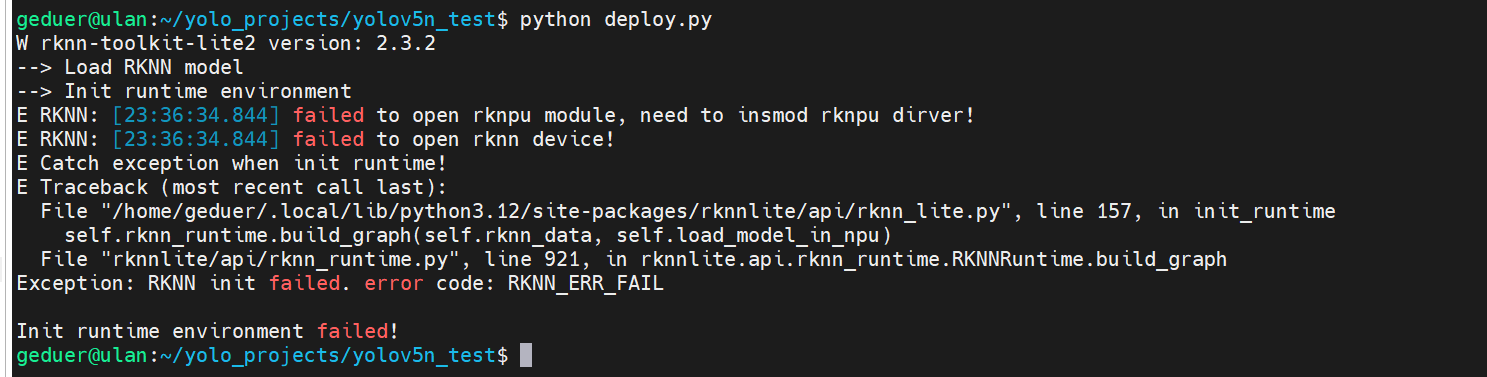
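To inspect the relevant line without opening an editor, the script can be printed around a given line number. This is a sketch only: the helper name `show_lines` is hypothetical, and line 184 is the number stated in this guide, which may differ in other versions of deploy.py.

```shell
# show_lines FILE CENTER [CONTEXT]
# Prints line CENTER of FILE, plus CONTEXT lines before and after it.
show_lines() {
    local file="$1"
    local center="$2"
    local context="${3:-0}"
    local start=$((center - context))
    local end=$((center + context))
    if [ "$start" -lt 1 ]; then
        start=1
    fi
    sed -n "${start},${end}p" "$file"
}

# Example usage: show line 184 with two lines of context on each side.
# show_lines deploy.py 184 2
```

When editing, `vim +184 deploy.py` opens the file with the cursor already on line 184.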

If running deploy.py produces the error shown in the figure, the rknpu driver did not load successfully.
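One way to look for evidence of the driver is to search the kernel log. The sketch below rests on an assumption that is not stated in this guide: that the rknpu driver writes messages containing the string "rknpu" to the kernel log when it initializes. The helper name `rknpu_mentioned` is hypothetical.

```shell
# rknpu_mentioned
# Reads log text on stdin and succeeds if any line mentions "rknpu"
# (case-insensitive). Assumption: the driver logs such lines on load.
rknpu_mentioned() {
    grep -i 'rknpu' >/dev/null
}

# Example usage (reading the kernel log usually requires root):
# if sudo dmesg | rknpu_mentioned; then
#     echo "kernel log mentions rknpu"
# else
#     echo "no rknpu messages found in the kernel log"
# fi
```

Finding no messages is only a hint that the driver did not load, not proof, because the kernel log buffer can wrap and drop early boot messages.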

To fix this, reboot the 幽兰 so that the driver is reloaded, then run deploy.py again.

    sudo reboot

Author: admin  Created: 2024-12-27 09:05

Last edited by: 郭建程  Updated: 2026-03-15 21:50
